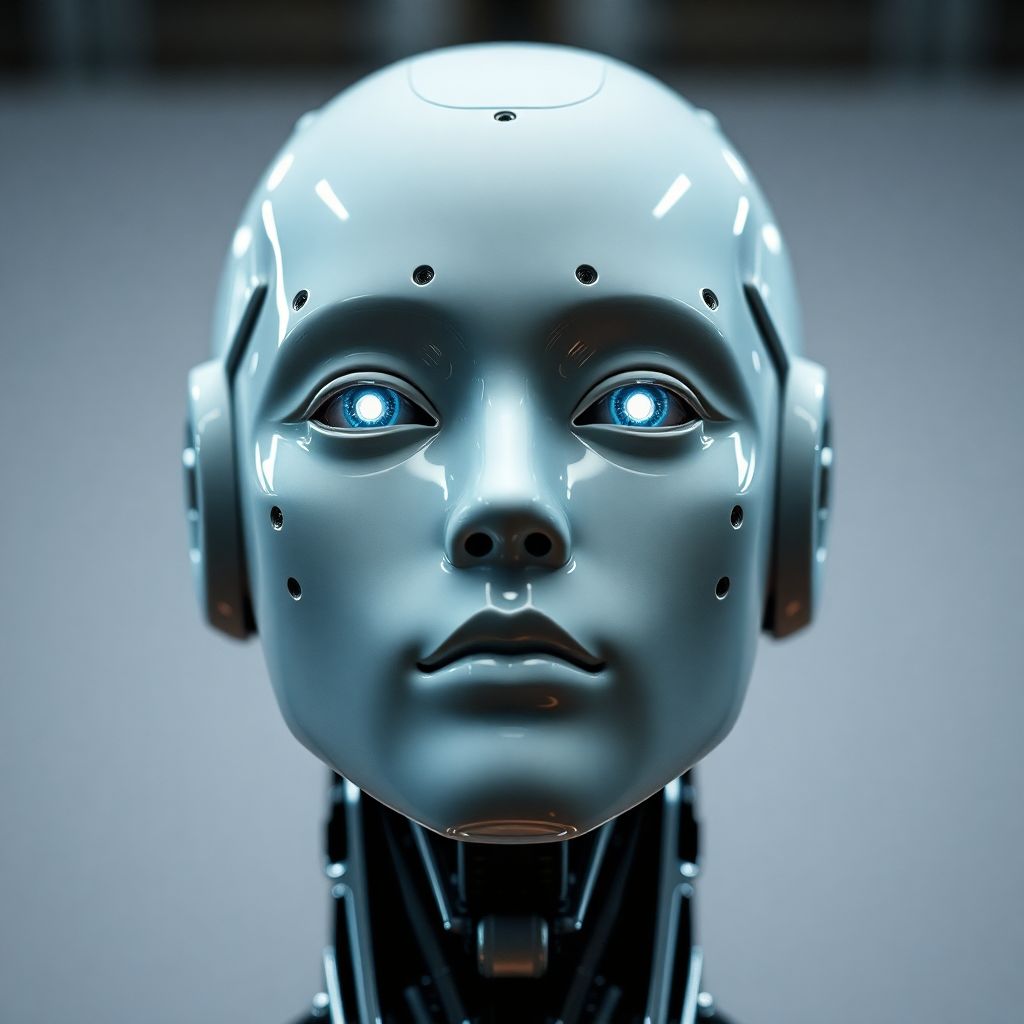

As robotic technology advances at an unprecedented pace, the line between machine and human is becoming increasingly blurred. A recent development by Chinese robotics company Aheadform is reigniting discussion of a long-standing psychological phenomenon known as the “uncanny valley.” Its ultra-realistic robotic head, dubbed Origin M1, has gone viral on social media, drawing both fascination and unease from viewers. The machine’s ability to blink, follow eye movement, and replicate subtle human expressions has sparked a visceral reaction among users, with many describing the experience as “eerie” and “disturbingly lifelike.”

Psychologists and roboticists have long studied the uncanny valley—the drop in comfort people feel when artificial beings appear almost, but not quite, human. The Origin M1 is a perfect case study. While its designers aimed to increase human-robot affinity through realism, the result appears to have had the opposite effect. The robot’s face moves with uncanny precision; it emulates micro-expressions that should, in theory, help build empathy. Instead, these features have triggered discomfort and even fear among some viewers.

This paradox illustrates a critical challenge in human-robot interaction: the assumption that the more humanlike a robot appears, the more we will accept it. Initial studies did show that people generally feel more at ease with robots that have familiar, human traits. However, once those traits reach a certain threshold—close enough to resemble real people but still perceptibly artificial—our brains rebel. This creates a psychological dissonance, where the robot is too human to be a machine but too machine-like to be human.

The reaction to the Origin M1 supports mounting evidence that hyper-realism in robotics can backfire. Research from institutions such as Stanford and MIT suggests that people often prefer robots that are clearly non-human in appearance but exhibit friendly, predictable behaviors. These robots feel more trustworthy precisely because they don’t attempt to mimic human emotional nuances too closely.

Humanoid robots such as Tesla’s Optimus, Figure 02, and Unitree’s G1 are also pushing toward humanlike design. These machines are built to walk, gesture, and respond to humans in ways that mirror our own behavior. Yet, as these designs become more sophisticated, developers are encountering the same resistance from the public. The goal of making robots “relatable” may be undermined by the psychological discomfort their appearance evokes.

One reason this reaction is so strong is that human brains are wired to detect subtle cues in facial expressions. When we see a face that mimics these cues but lacks the natural fluidity and emotional depth of a real person, the result can be unsettling. This is especially true when the robot’s expressions fall just short of authenticity—smiles that don’t reach the eyes, or blinks that seem slightly off in timing.

Further complicating the issue is the emotional ambiguity that hyper-realistic robots present. Humans rely on facial expressions for social navigation, gauging whether someone is angry, happy, sad, or lying. When a robot mimics these expressions without the underlying emotional context, it creates confusion and mistrust. This disconnect may explain why people describe such robots as “creepy”—their faces suggest emotional presence, but their lack of true feeling betrays their artificial nature.

Despite the discomfort, there are potential benefits to realistic robots in certain contexts. In fields like eldercare, therapy, or education, humanlike machines could provide companionship or support. However, designers must tread carefully, balancing realism with clarity of purpose. Making robots look too human may inadvertently hinder their social acceptance rather than enhance it.

To address this, some developers are exploring alternative aesthetics. Instead of striving for perfect human mimicry, they are designing robots with stylized or minimalist features—clear indicators of artificiality paired with warm, approachable behavior. This approach not only avoids the uncanny valley but also encourages more transparent interaction between humans and machines.

The uncanny valley also raises ethical questions. As robots become harder to distinguish from humans, how do we ensure they are not used to deceive? Could lifelike robots be weaponized for manipulation, surveillance, or misinformation? These concerns are not hypothetical; they are already informing global conversations about AI regulation and robotics ethics.

Moreover, the emotional impact of humanoid robots may extend beyond individual discomfort. As we encounter more machines that look and act like people, our understanding of identity, empathy, and even morality may evolve. Will we begin to attribute consciousness to machines based solely on their appearance? Will our emotional bonds shift as we interact with increasingly lifelike artificial companions?

In the end, the Origin M1 is more than a viral curiosity—it is a glimpse into our future. It challenges us to reconsider what it means to be human and how we define authenticity in a world where machines can mirror our every move. As technology continues to advance, the question is not just whether we can make robots look human—but whether we should.

For developers and researchers, the message is clear: realism alone is not enough. Successful human-robot interaction depends not just on appearance, but on transparency, functionality, and emotional clarity. As we navigate this complex frontier, empathy—both human and artificial—will be the true measure of progress.